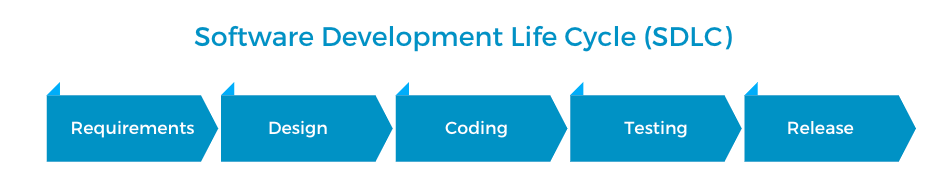

Using the Software Development Life Cycle (SDLC) as a model to secure your application

If you are into building software, you’ve probably heard of the software development life cycle (SDLC). The SDLC describes the five stages of application development: the requirements phase, the design phase, the coding phase, the testing phase, and the release phase.

- Requirements phase: You start by listing out what you need to build, or your application’s “requirements”. So if you are building a messaging app, you’ll probably need a way to let users sign up, let them log in, let users send private messages, and so on. Understanding exactly what you need to build will help you design your application in the next phase.

- Design phase: In this phase, you take the application requirements from the last step and plan out the structure of your application.

- Coding phase: In the coding phase, you actually build out application functionalities.

- Testing phase: You test the application in terms of functionality, usability, and security.

- Release phase: Finally, you release the application to users and maintain it over time.

What does this have to do with application security?

So, what does all this have to do with application security?

When we look at the SDLC, we also see five distinct chances to integrate security measures into the development process: five chances to make sure that your application is as secure as possible!

Requirements

Let’s start with the requirements phase. When you are identifying the functional requirements of the application, you should also identify the security requirements of those functionalities. For instance, if the application stores or transports data, is any of that data sensitive? Do you need to take in user input and process it? If so, you might need to implement input validation. Will the app perform risky functionality such as file uploads?

Design

The next step is to take the security requirements that you listed in the requirements phase and plan the application design around them. You’re typically asking questions like: How should we implement authentication and authorization? How do we handle user input safely? What kind of input validation should we use on different kinds of input? Where do we back up data and code? What protocols or encryption should we use to store and transport data safely?

The key is to design the app securely by considering security requirements along with its functional ones.

Coding

For the coding phase, put in place measures to ensure that development is being done securely. One of the first things to do is to choose a secure programming language and framework. You can also implement policies and guidelines for how to handle untrusted data safely via validation, sanitization, and output encoding. Having a standardized policy for how to deal with these situations will make sure that each potential weak point in the application is properly handled.
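As a rough illustration of what a validation and output-encoding guideline could look like in code, here is a minimal Python sketch. The username policy and the function names `validate_username` and `render_comment` are assumptions made up for this example, not a standard; `html.escape` is from the Python standard library.

```python
import html
import re

# Hypothetical policy: usernames are 3-20 letters, digits, or underscores.
USERNAME_PATTERN = re.compile(r"[A-Za-z0-9_]{3,20}")


def validate_username(raw: str) -> str:
    """Allow-list validation: reject anything that does not match the policy."""
    if not USERNAME_PATTERN.fullmatch(raw):
        raise ValueError("invalid username")
    return raw


def render_comment(username: str, comment: str) -> str:
    """Output encoding: escape untrusted text before placing it in HTML."""
    safe_user = html.escape(validate_username(username))
    safe_comment = html.escape(comment)
    return f"<p><b>{safe_user}</b>: {safe_comment}</p>"


print(render_comment("alice_1", "<script>alert(1)</script>"))
# prints: <p><b>alice_1</b>: &lt;script&gt;alert(1)&lt;/script&gt;</p>
```

Validation here uses an allow list (accept only what the policy permits) rather than trying to block known-bad input, and encoding happens at the point of output, so the same comment could be encoded differently for a different destination.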

During this stage, you should also employ a static application security testing (SAST) tool to continuously scan your code during development and eliminate as many security vulnerabilities as possible. Static analysis tools automatically identify signs of various vulnerabilities in your code so that you can fix them right away. This will make things a lot easier during the testing phase.
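To give a feel for how static analysis works, here is a toy Python sketch that inspects source code without running it and flags direct calls to `eval` and `exec`. The rule set `RISKY_CALLS` and the function `find_risky_calls` are hypothetical names for this example; real static analysis tools apply far more rules and track how data flows through the program.

```python
import ast

# Hypothetical rule set: built-ins that often signal unsafe handling of untrusted data.
RISKY_CALLS = {"eval", "exec"}


def find_risky_calls(source: str) -> list[tuple[int, str]]:
    """Return (line number, function name) for each direct call to a risky built-in."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in RISKY_CALLS
        ):
            findings.append((node.lineno, node.func.id))
    return sorted(findings)


# The sample is only parsed, never executed.
sample = "x = input()\nresult = eval(x)\nprint(result)\n"
print(find_risky_calls(sample))
# prints: [(2, 'eval')]
```

Because the check only parses the code, it can run on every commit or inside the editor, which is what makes this kind of scan practical during the coding phase.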

Testing

Next up, in the testing phase, run a wide variety of security tests against your application to make sure no severe bugs make it to production. You can run dynamic scanning tools, conduct a penetration test, or conduct a manual security code review, for instance.

You should also scan your application with a software composition analysis (SCA) tool. SCA tools keep track of an application’s dependencies and alert you if publicly disclosed vulnerabilities are found in any of them.
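The core idea behind SCA can be sketched in a few lines of Python: list the application's pinned dependencies and compare them against known advisories. Everything in this example is hypothetical, including the package names, the versions, and the `KNOWN_VULNERABLE` table; a real SCA tool would pull advisories from a vulnerability database and also resolve transitive dependencies.

```python
# Hypothetical advisory data: package name -> versions with a publicly disclosed vulnerability.
KNOWN_VULNERABLE = {
    "examplelib": {"1.0.0", "1.0.1"},
    "demo-parser": {"2.3.0"},
}


def parse_requirements(text: str) -> dict[str, str]:
    """Parse pinned 'name==version' lines, skipping blanks, comments, and unpinned entries."""
    pins = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "==" not in line:
            continue
        name, version = line.split("==", 1)
        pins[name.strip().lower()] = version.strip()
    return pins


def find_vulnerable(pins: dict[str, str]) -> list[str]:
    """Return 'name==version' for every pinned dependency listed in the advisory data."""
    return sorted(
        f"{name}=={version}"
        for name, version in pins.items()
        if version in KNOWN_VULNERABLE.get(name, set())
    )


requirements = """
# hypothetical dependency list
examplelib==1.0.1
demo-parser==2.4.0
otherlib==0.9
"""
print(find_vulnerable(parse_requirements(requirements)))
# prints: ['examplelib==1.0.1']
```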

Often, we won’t start to consider security until the testing phase. But this phase should not be where your security effort starts. Instead, it should be a fail-safe that catches vulnerabilities that somehow slip past the security measures implemented earlier in your development cycle.

Release

Finally, after release, you can build in routine security tests, such as static analysis scans and SCA, to monitor the application after deployment. You can also consider starting a bug bounty program or vulnerability disclosure program to let third-party security researchers safely report security bugs in your application.

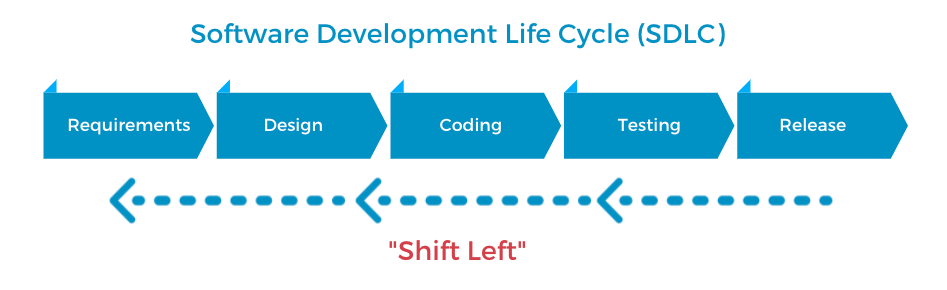

“Shift left” security

This entire process is what people mean when they say “shift left”. “Shift left” security means performing security testing and checks earlier rather than later in the SDLC. Instead of integrating security considerations into only the later phases of software development like testing and release, we should incorporate them into the earlier stages of requirements, design, and coding.

By considering security during the application’s requirements and design phases, you can plan out your security measures before significant effort is wasted in implementing insecure designs and architectures. And by conducting security testing throughout the coding phase too, you can find security flaws before a feature becomes more complicated and integrated into the rest of the application.

Security does not start after you finish writing code. Instead, it should be a constant consideration before, during, and after your work in the IDE.

Thanks for reading! What is the most challenging part of developing secure software for you? I’d love to know. Feel free to connect on Twitter @vickieli7.