Introduction

Caching is often likened to a magician’s sleight of hand: it makes web applications feel seamless and fast. But as with any magic trick, it can have unintended consequences if it is not executed precisely. Cache control sits where performance meets security, because the same headers that speed up delivery also determine who may keep a copy of a response and for how long. Let’s dig into cache control and see how to use it to improve security.

The Basics: What is Cache-Control?

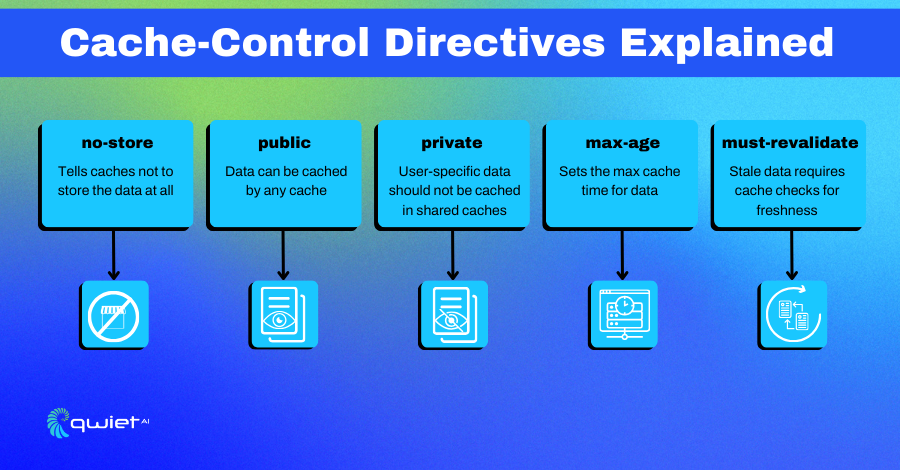

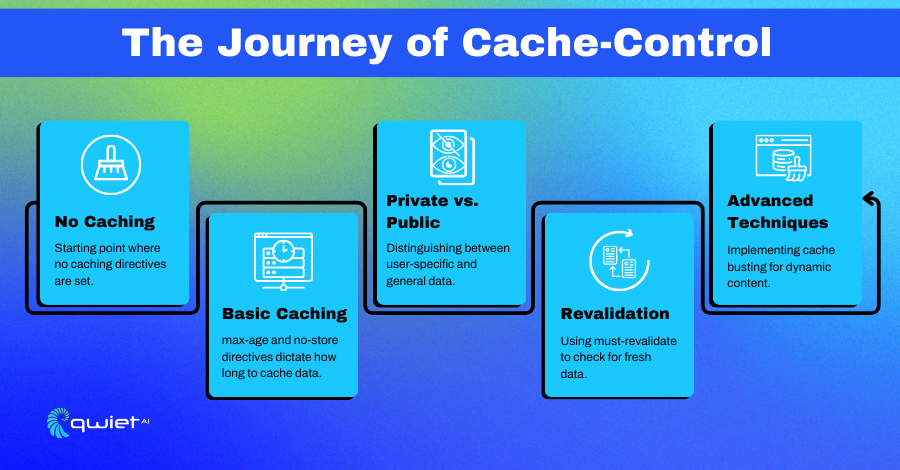

Cache-Control is more than just an HTTP header; it’s a directive, a command, and a way to communicate with browsers and intermediary caches about how they should treat your web content.

Think of it as a set of instructions guiding these entities on how long to retain specific data or whether to store it at all. By mastering Cache-Control, developers can ensure that their content is delivered efficiently without compromising security.
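To make the "set of instructions" idea concrete, here is a minimal sketch that splits a Cache-Control value into its individual directives. The `parseCacheControl` helper is a hypothetical name used for illustration, not part of Express or Node.js, and it ignores edge cases such as commas inside quoted values.

```javascript
// Hypothetical helper (not part of Express): split a Cache-Control value
// into a map of directive name -> value (or true for valueless directives).
function parseCacheControl(headerValue) {
  const directives = {};
  for (const part of headerValue.split(',')) {
    const trimmed = part.trim();
    if (trimmed === '') continue; // tolerate stray commas
    const eq = trimmed.indexOf('=');
    if (eq === -1) {
      // Directive without a value, e.g. "no-store" or "public".
      directives[trimmed.toLowerCase()] = true;
    } else {
      // Directive with a value, e.g. "max-age=3600".
      const name = trimmed.slice(0, eq).trim().toLowerCase();
      const value = trimmed.slice(eq + 1).trim().replace(/^"|"$/g, '');
      directives[name] = value;
    }
  }
  return directives;
}

console.log(parseCacheControl('public, max-age=3600'));
// { public: true, 'max-age': '3600' }
```

Each key in the result is one instruction to the cache: some are simple switches, others carry a value such as a lifetime in seconds.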

Code Snippet: Basic Cache-Control in Express.js

```javascript
const express = require('express');
const app = express();

// Apply to every response: no browser or intermediary cache may store it.
app.use((req, res, next) => {
  res.set('Cache-Control', 'no-store');
  next();
});
```

In this snippet, we use Express.js to set a Cache-Control header with the no-store directive. This is like putting a ‘Do Not Enter’ sign on your data, telling all browsers and caches that they should not store this data under any circumstances. This is particularly useful for pages that display sensitive information like passwords or personal data.

Why Cache-Control Matters for Security

Caching is a boon for performance, but it is a balancing act. It’s akin to a tightrope walk, with the advantage of speed on one side and the potential pitfalls of security breaches on the other.

When not managed correctly, cached data can become a treasure trove for malicious actors, revealing sensitive information or serving outdated, misleading content. Hence, understanding and implementing the right Cache-Control directives is paramount for any secure web application.

Code Snippet: Cache-Control for Public and Private Data in Express.js

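```javascript
// Public data: any cache (browser, proxy, CDN) may reuse it for one hour.
app.get('/public', (req, res) => {
  res.set('Cache-Control', 'public, max-age=3600');
  res.send('This is public data.');
});

// Private data: must not be stored by any cache.
app.get('/private', (req, res) => {
  res.set('Cache-Control', 'private, max-age=0, no-store');
  res.send('This is private data.');
});
```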

Here, we’re setting Cache-Control headers for different types of data. We use the public directive and a max-age of 3600 seconds for public data, allowing it to be cached for an hour. For private data, we use the private directive along with no-store to ensure that this data is never cached. The max-age=0 ensures that even if the data somehow ends up in a private cache, it becomes stale immediately.
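The difference between these directives can be sketched as a storage decision made by a cache. The `mayStore` function below is a hypothetical, heavily simplified model (real caches follow the fuller rules of the HTTP caching specification); it only distinguishes a private cache, such as a browser, from a shared one, such as a proxy or CDN.

```javascript
// Simplified storage decision; real caches apply many more rules.
// cacheType is 'private' (a browser) or 'shared' (a proxy or CDN).
function mayStore(cacheControl, cacheType) {
  const directives = cacheControl
    .toLowerCase()
    .split(',')
    .map((d) => d.trim().split('=')[0]);

  if (directives.includes('no-store')) return false; // nobody may keep a copy
  if (directives.includes('private') && cacheType === 'shared') return false;
  return true;
}

console.log(mayStore('public, max-age=3600', 'shared'));          // true
console.log(mayStore('private, max-age=600', 'shared'));          // false
console.log(mayStore('private, max-age=600', 'private'));         // true
console.log(mayStore('private, max-age=0, no-store', 'private')); // false
```

The last line shows why no-store is the directive that matters most for sensitive responses: it rules out storage even in the user's own browser.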

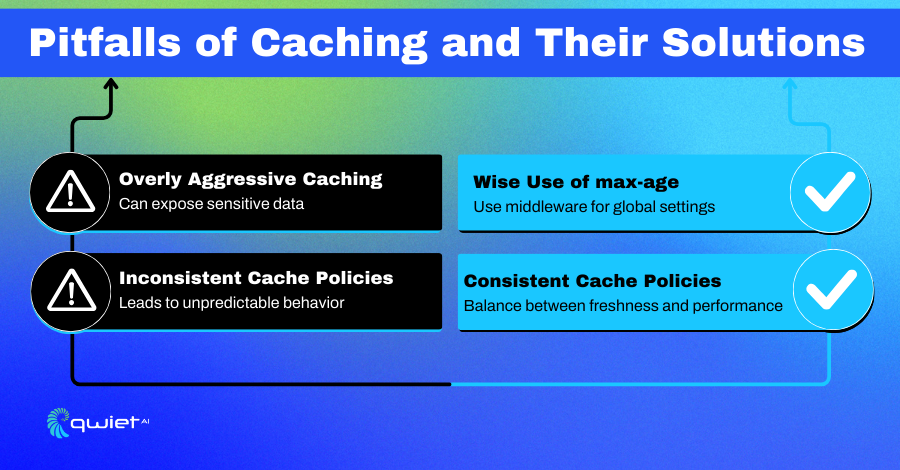

Common Pitfalls and How to Avoid Them

Overly Aggressive Caching

In the quest for performance, it’s easy to fall into the trap of aggressive caching. However, this approach can sometimes backfire, leading to potential security risks. Serving stale data or inadvertently revealing sensitive information are just some of the hazards of not setting the right cache parameters.

Countermeasures:

- Use the max-age directive wisely.

- Use must-revalidate for critical resources.

- Ensure sensitive routes have appropriate no-cache or no-store directives.

- Implement cache purging strategies for outdated content.

Code Snippet: Using must-revalidate in Express.js

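```javascript
app.get('/sensitive', (req, res) => {
  // Only the user's own browser may cache this, for at most 60 seconds;
  // after that the copy must be revalidated with the server before reuse.
  res.set('Cache-Control', 'private, max-age=60, must-revalidate');
  res.send('This is sensitive data.');
});
```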

In this example, we’re using the must-revalidate directive. Once the cached copy is more than 60 seconds old, the cache must not reuse it without first checking with the origin server. If the content has changed, the cache fetches the new version before responding; if the origin cannot be reached, the cache returns an error (typically 504 Gateway Timeout) rather than the stale copy. This is a good way to balance performance and security for sensitive data.
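The effect of must-revalidate is easiest to see when the origin is unreachable. The following sketch models a cache's choice under simplified assumptions; `cacheDecision` and its result labels are hypothetical names chosen for illustration, not an actual browser or proxy API.

```javascript
// Simplified model of what a cache does with a stored response.
function cacheDecision({ ageSeconds, maxAge, mustRevalidate, originReachable }) {
  if (ageSeconds < maxAge) return 'serve-cached';       // still fresh
  if (originReachable) return 'revalidate-with-origin'; // stale: ask the origin first
  // Stale and the origin cannot be reached:
  return mustRevalidate ? 'error-504' : 'may-serve-stale';
}

// Fresh copy: served straight from the cache.
console.log(cacheDecision({ ageSeconds: 30, maxAge: 60, mustRevalidate: true, originReachable: true }));
// serve-cached

// Stale copy, origin down, must-revalidate set: the stale copy is not used.
console.log(cacheDecision({ ageSeconds: 90, maxAge: 60, mustRevalidate: true, originReachable: false }));
// error-504
```

Without must-revalidate, a cache that has lost contact with the origin is allowed to fall back to the stale copy, which is exactly the behavior the directive rules out.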

Inconsistent Cache Policies

Inconsistency in cache policies can be likened to a ship without a compass, leading to unpredictable behavior and potential vulnerabilities. Without a standardized approach, different parts of an application might have conflicting cache settings, leading to confusion and potential security breaches.

Countermeasures:

- Use middleware to set global cache policies.

- Regularly audit your cache settings.

- Implement a centralized cache management system.

- Educate development teams on best practices for cache settings.
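An audit of cache settings can start very small. The sketch below assumes you can produce a map from route path to the Cache-Control value that route sends; the `auditCachePolicies` function, the sample routes, and the list of sensitive prefixes are all hypothetical and only illustrate the idea.

```javascript
// Hypothetical audit: `routes` maps a path to its Cache-Control value, and
// `sensitivePrefixes` lists path prefixes that should never be cached.
function auditCachePolicies(routes, sensitivePrefixes) {
  const findings = [];
  for (const [path, cacheControl] of Object.entries(routes)) {
    const isSensitive = sensitivePrefixes.some((p) => path.startsWith(p));
    const directives = (cacheControl || '')
      .toLowerCase()
      .split(',')
      .map((d) => d.trim());

    if (isSensitive && !directives.includes('no-store')) {
      findings.push(`${path}: sensitive route without no-store`);
    }
    if (directives.includes('public') && directives.includes('private')) {
      findings.push(`${path}: conflicting public and private directives`);
    }
  }
  return findings;
}

const report = auditCachePolicies(
  {
    '/public': 'public, max-age=3600',
    '/private/profile': 'private, max-age=60',
  },
  ['/private']
);
console.log(report);
// [ '/private/profile: sensitive route without no-store' ]
```

A check like this could run in a test suite so that a new route with a risky policy is flagged before it ships.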

Code Snippet: Global Cache-Control Middleware in Express.js

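```javascript
// Global policy: applied to every request before the route handlers run.
app.use((req, res, next) => {
  if (req.path.startsWith('/private')) {
    // Sensitive area: never store the response anywhere.
    res.set('Cache-Control', 'private, max-age=0, no-store');
  } else {
    // Everything else: any cache may reuse the response for one hour.
    res.set('Cache-Control', 'public, max-age=3600');
  }
  next();
});
```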

Here, we’re using middleware to apply a consistent Cache-Control policy across our application. This middleware checks the URL path and sets the Cache-Control header accordingly. This ensures that your caching behavior is consistent and predictable, reducing the chance of accidental data exposure.
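As the number of rules grows, one possible refinement is to move them into a single table and look the policy up by path. This is a sketch under assumptions: the `CACHE_POLICIES` table, the `/static` prefix, and the choice of no-store as the fallback are illustrative, not a recommended production policy.

```javascript
// Hypothetical policy table: the first matching prefix wins.
const CACHE_POLICIES = [
  { prefix: '/private', value: 'private, max-age=0, no-store' },
  { prefix: '/static', value: 'public, max-age=3600' },
];

// Anything not listed falls back to the most restrictive policy.
const DEFAULT_POLICY = 'no-store';

function cacheControlFor(path) {
  const match = CACHE_POLICIES.find((p) => path.startsWith(p.prefix));
  return match ? match.value : DEFAULT_POLICY;
}

// Wiring it into an existing Express app would look roughly like:
// app.use((req, res, next) => {
//   res.set('Cache-Control', cacheControlFor(req.path));
//   next();
// });

console.log(cacheControlFor('/private/account')); // private, max-age=0, no-store
console.log(cacheControlFor('/static/logo.png')); // public, max-age=3600
console.log(cacheControlFor('/checkout'));        // no-store
```

Defaulting to the restrictive policy means a route someone forgets to classify is slow rather than exposed, which is usually the safer mistake.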

Advanced Techniques

Cache Busting for Dynamic Content

Dynamic content is ever-evolving, and serving outdated versions can lead to a subpar user experience or misinformation. Cache busting is the antidote to this problem, ensuring that users always receive the most recent version of the content.

Code Snippet: Cache Busting in Express.js

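```javascript
app.get('/dynamic-content', (req, res) => {
  // A timestamp stands in for a version identifier; it changes on every request.
  const version = Date.now();
  // ETag values must be quoted strings.
  res.set('ETag', `"${version}"`);
  // no-cache makes the browser revalidate with the server before reusing a stored copy.
  res.set('Cache-Control', 'no-cache');
  res.send(`This is dynamic content. Version: ${version}`);
});
```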

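In this example, we’re using the ETag header to set a unique identifier for each version of the content. The ETag is set to the current timestamp, so every response carries a different ETag and a conditional request from the browser (If-None-Match) never matches; the server therefore sends a full, fresh response each time. Keep in mind that an ETag is only a validator: it is a directive such as no-cache that makes the browser revalidate on every request instead of reusing a stored copy.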
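A timestamp changes even when the content does not, so a common alternative is to derive the identifier from the content itself. The sketch below uses Node's built-in crypto module; the helper names (`fingerprint`, `bustedUrl`, `etagFor`, `isNotModified`) are hypothetical, and the If-None-Match comparison is simplified to a single exact match.

```javascript
const { createHash } = require('node:crypto');

// Derive a short, stable fingerprint from the content itself.
function fingerprint(content) {
  return createHash('sha256').update(content).digest('hex').slice(0, 12);
}

// Cache busting via the URL: a content change yields a new URL,
// so previously cached copies are simply never requested again.
function bustedUrl(path, content) {
  return `${path}?v=${fingerprint(content)}`;
}

// A strong ETag is a quoted string; here it reuses the fingerprint.
function etagFor(content) {
  return `"${fingerprint(content)}"`;
}

// True when the client's If-None-Match value matches the current ETag,
// in which case the server can answer 304 Not Modified with no body.
function isNotModified(ifNoneMatch, etag) {
  return ifNoneMatch === etag;
}

const body = 'This is dynamic content.';
console.log(bustedUrl('/app.js', body) === bustedUrl('/app.js', body));       // true
console.log(bustedUrl('/app.js', body) === bustedUrl('/app.js', body + '!')); // false
console.log(isNotModified(etagFor(body), etagFor(body)));                     // true
```

With content-derived identifiers, unchanged content can still be served from the cache or answered with a 304, while any change is picked up immediately.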

Conclusion

Cache-Control is a multifaceted tool bridging the gap between performance and security. It’s not just about making your application run faster; it’s about ensuring that every piece of data served is timely and secure. As we’ve seen, a well-configured Cache-Control header can be a formidable ally in the quest for a robust web application. Take control of your Cache-Control today, and keep your web application both fast and secure. Interested in further fortifying your application? Book a demo with Qwiet.ai now.